A Ghana technology satire on AI hiring, algorithmic bias, and the very specific experience of being scored by something that has never eaten rice with its bare hands.

The Cab Driver

A couple of weeks ago, after a brief hiatus, I went back to one of my favourite Spotify podcasts, Trevor Noah’s What Now. The title of one episode caught my attention almost immediately: Is Your New Boss A Robot?

I dived in.

Within a few minutes, Hilke Schellmann, an Emmy Award-winning investigative journalist who covers AI, shared an anecdote that stopped me mid-commute. She had entered a cab in Dubai, and the driver, almost immediately, began to talk. He had just come from a job interview and looked shattered. Not the shattered of someone who had faced a difficult panel of executives or stumbled through a technical question. The shattered of someone who had sat in front of a screen, answered questions into a camera, and waited for something that was not human to decide whether he was good enough.

He had been interviewed by a robot.

I heard the story and felt the weight of it. Then, as Ghanaians do when something lands in their chest, I began to reimagine it for this Ghana technology satire.

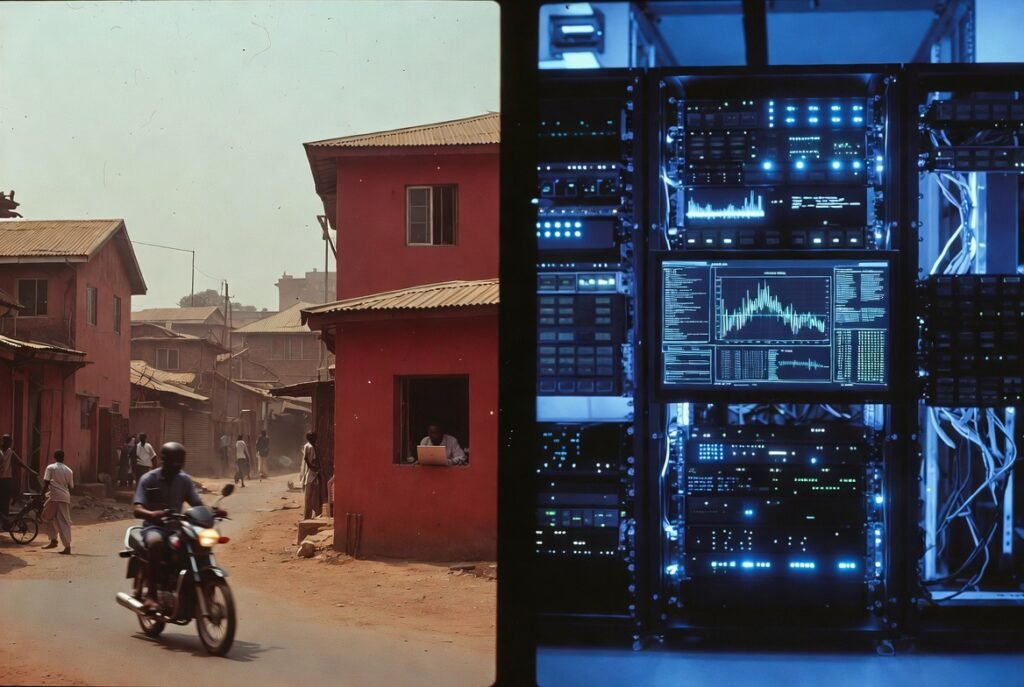

I imagined the scene in Ghana.

Ghana’s Graduate

Not a cab driver, as in Hilke’s Dubai anecdote, but a Ghanaian graduate of the University of Ghana, Legon, with three years of job applications behind him. He sits in a room in Accra. His CV is polished to a shine, his interview shirt ironed the night before. He has tested his internet connection twice and wiped his front camera with a cloth.

He is answering questions into a laptop while an algorithm on the other end of the screen analyses the coherence of his sentences, the movement of his facial muscles, the clarity of his pronunciation, and the background noise drifting through the window from a street preacher outside who completely disregards people’s right to think in silence. The algorithm, meanwhile, is already moving on: it has thirty more interviews scheduled this afternoon.

The street is Accra being Accra. Until now, the algorithm had never been to Accra.

Meet The New Boss

The AI hiring algorithm does not have a face, or maybe it has one that looks like a mixture of Trump and Musk. It does not have an office, or maybe it has a bedroom that doubles as one. It has never sat in traffic on the Spintex Road, never waited three hours in a government building for a document to be stamped, never eaten gari and beans from a roadside seller perched beside the gutter, and never understood instinctively that in Ghana patience is not just a virtue but a survival skill.

However, it has been trained on three decades of hiring decisions from companies that mostly hired people who look, sound, and live differently from the person sitting in front of the laptop in Accra.

It is very confident about this training.

In 2024 alone, AI-powered hiring tools processed over 30 million applications globally while triggering hundreds of discrimination complaints. The tools scan CVs, rank candidates, conduct video interviews, analyse voices and facial expressions, and produce a score.

The score then determines whether a human ever sees the candidate’s name. The candidate is not told what the score is. They are not told a score exists. A few days later they receive an email, from another AI, that thanks them for their interest and nothing more.

The Name Pronunciation Wahala

In this Ghana technology satire, the AI interviews me. “Hello, Mr. Samuel Clue. Welcome to Good Credentials Holdings Limited. We are happy to have you. I’m Leo, your interviewer for today. I hope we have a fruitful one.”

Mr. Leo has pronounced my name impressively wrong, but how dare I correct him on something it may take big data and a great deal of time to train him to do? So the interview continues with me as Mr. Clue, someone whose only clue is to get a job, and maybe an AI boss for the very first time. Groundbreaking, isn’t it?

It Will Judge Before You Finish Your First Sentence

HireVue, one of the most widely used AI video interview platforms in the world, analyses candidates through facial expression recognition, speech pattern assessment, and keyword relevance scoring. It evaluates active listening by checking whether responses align with the questions asked, assesses adaptability through responses to behavioural questions, and runs sentiment analysis to gauge attitudes toward challenges.

It does all of this in the first ninety seconds.

What it also does, quietly, efficiently, and without announcing itself, is rate candidates lower because of their accents. It rates them lower for facial expressions that do not match its training data. It rates them lower for background noise. The Ghanaian candidate answering from Accra has a Ghanaian accent, and the training data did not contain much of that. His facial expressions are his own, shaped by a culture, a history, and a way of being in the world that the algorithm has not encountered. And outside the window, Accra is doing what Accra does: a generator weeping, a motorcycle snoring as it moves, a hawker calling out prices to the angels. The algorithm notes the noise and is not impressed.

The Borrowed Accent

We may start borrowing locally acquired foreign accents from friends who can roll their tongues really well, or paying for an accent from people who have spotted the business opportunity and seized it. Ghana we dey.

Stanford researchers found in October 2025 that AI resume screening tools gave older male candidates higher ratings than female and younger candidates, even though all the resumes were generated from the same data. A separate large-scale study of five leading AI models found that they systematically favour female candidates while disadvantaging Black male applicants, even when qualifications are identical. Its researchers constructed approximately 361,000 fictional resumes to test this, and the bias held across GPT, Gemini, Claude and Llama alike.

The algorithm does not know it is biased. It learned from humans. Humans were biased. The algorithm inherited the bias and removed the conscience that occasionally made the human hesitate.

This lands in your inbox every Tuesday and Friday.


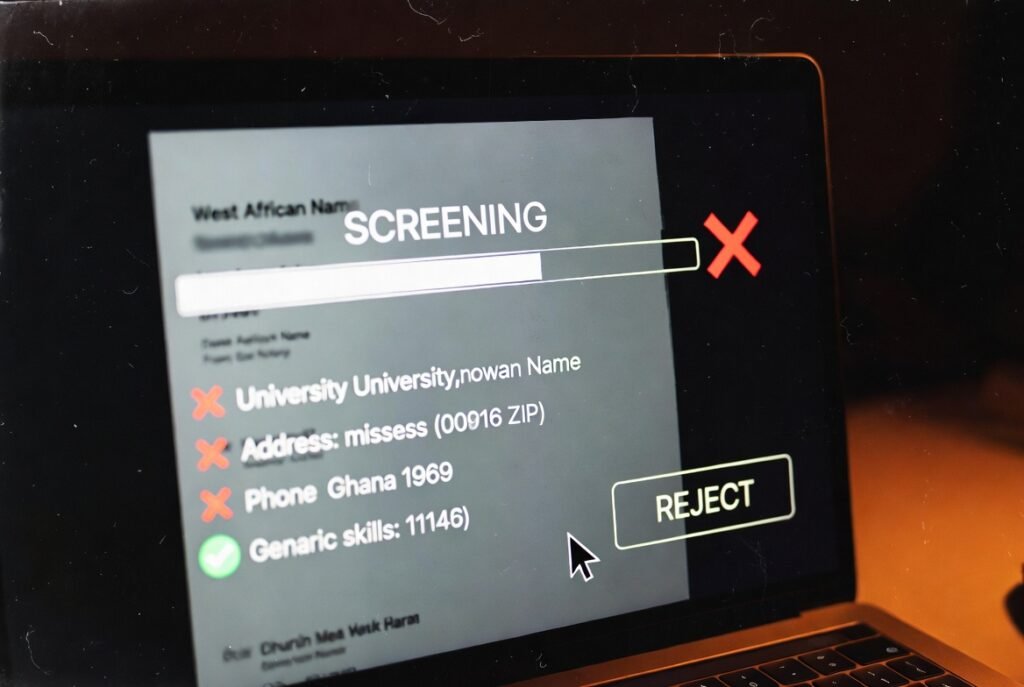

The Proxy That Does Not Know It Is A Proxy

Here is the detail that should concern every Ghanaian graduate applying to a multinational company using AI screening tools.

The algorithm, under legal pressure in some jurisdictions, has been instructed not to use race, gender, or nationality as explicit factors. It complies. It then finds other factors that correlate with the same outcomes, and uses those instead.

Graduation years serve as proxies for age. Certain zip codes correlate with race. Gaps in employment history disproportionately affect women who took parental leave or anyone who spent time navigating the specific unpredictabilities of life in a country where things do not always run on schedule.

The Ghanaian candidate does not have a US zip code; they have a Ghana Post GPS address. The system has no field for this, so it interprets the missing zip code as an anomaly.

The algorithm was trained to be suspicious of anomalies.

Further, the University of Ghana does not appear in most of the Western university ranking databases the algorithm was trained on. Institutions without rankings are assigned a default score. The default score is not generous. The algorithm did not decide this out of malice. It simply learned that institutions it recognised produced candidates who got hired. Institutions it did not recognise did not have enough data points.

To the algorithm, Legon is not a data point but, at best, a comma in a form field it was not built to read.

The Human Who Agrees With The Robot

Here is the part of this story that is genuinely difficult to satirise because it is too painful to be funny.

A University of Washington study placed 528 people in simulated hiring scenarios, where they worked with AI systems to select candidates. When the AI gave biased recommendations, at rates consistent with those of real AI models, the participants mirrored those biases in their own hiring decisions.

The algorithm said no. The humans looked at its reasoning and said no too. They did not ask the algorithm why; they assumed it had seen something in the data that they had missed. The algorithm had seen three decades of bias and called it insight.

The Ghanaian candidate was rejected twice: once by the machine, then again by the person who trusted the machine more than their own human judgement.

The candidate received a single email, consoling rather than congratulating him.

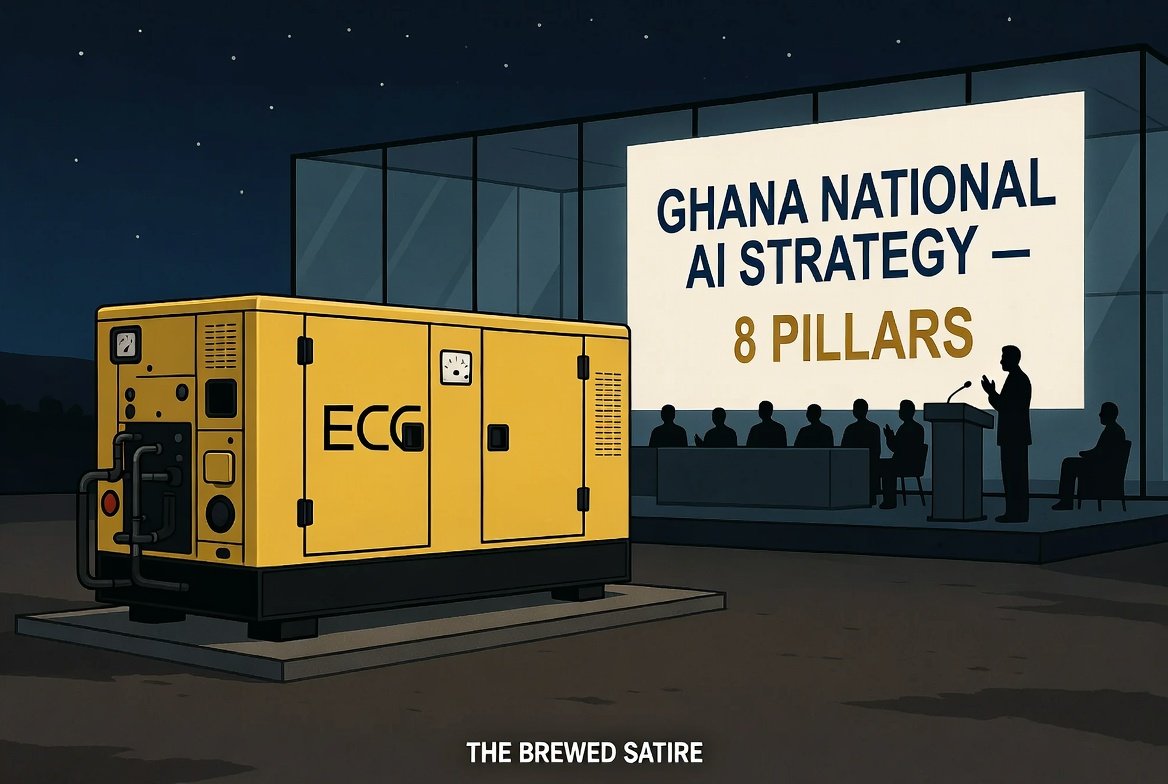

The Regulation That Stops At The Border

New York City has a law requiring annual independent bias audits of any automated employment decision tool used in hiring. California’s Civil Rights Council finalised regulations in October 2025 making it unlawful to use an automated decision system that discriminates against applicants on the basis of protected traits. Colorado’s AI Act, effective June 2026, requires developers and deployers of AI hiring tools to use reasonable care to prevent algorithmic discrimination.

These are real laws. They apply in real jurisdictions. They were written because the problem is real and documented and large enough to generate class-action lawsuits.

Ghana has 500,000 young people entering the labour market every year. A growing number of multinational companies advertising roles in Ghana, or hiring Ghanaians for remote positions, are using these same AI tools to process applications. The tools were built in cities with bias audit requirements and are being deployed in cities without them.

The regulation was written for the room where the tool was built. It does not reach the room where the tool is being used, quietly and efficiently, to screen out candidates who graduated from institutions the algorithm has never heard of.

The 2026 Ghana Job Market Report confirms that 55 percent of all advertised roles in Ghana now explicitly require a Bachelor’s degree. The Ghanaian graduate spent four years earning that degree, and the algorithm spent ninety seconds deciding it was not the right kind of degree.

What The Algorithm Would Have Said About Some People We Know

There is a thought experiment worth running.

Take the CV of a young man from the Gold Coast who was educated at Lincoln University in Pennsylvania and at the University of Pennsylvania. He returned home to organise, to agitate, to build. His accent was colonial-school English with something underneath it that was entirely his own. His address was not a zip code, and his institutional affiliations were political movements that no ranking database would have categorised as prestigious.

His name was Kwame.

Run the CV through the algorithm.

The accent flag would have fired. The address anomaly would have been logged. And the employment gap from the period he spent in prison for organising, precisely the kind of gap the algorithm has learned to distrust, would have registered.

The algorithm would have sent him the consoling email.

It would have thanked Kwame Nkrumah for his interest and told him his application would be kept on file. Then it would have moved on to the next candidate, someone whose zip code it recognised, whose university it had enough data points on, and whose accent its sentiment analysis had been trained to read as competent.

Ghana might have remained the Gold Coast, perhaps still under colonial rule.

The algorithm would never have known what it had missed. It does not have a mechanism for knowing what it misses. It only knows what it selects.

The Score You Were Never Shown

The cab driver in Hilke Schellmann’s story looked shattered. He had done everything right. He had prepared, he had shown up, he had answered the questions. He had not known that his answers were being scored in real time by something that had never sat in traffic, never waited for a callback that did not come, and never understood that the particular weight of looking for work in a country that cannot yet absorb everyone it produces builds a kind of resilience that no ninety-second sentiment analysis has yet learned to measure.

The Ghanaian graduate closes the laptop. The interview window disappears. Somewhere on a server, in a city they have never visited, a score sits in a database. They will never see the score and will never know what generated it. They will receive an email in a few days.

The email will thank them for their interest.

The algorithm is already in its next interview. It is asking someone else to describe their greatest weakness, measuring the pause before they answer, and deciding whether the pause is thoughtful or evasive. It had never heard of Ghana. Perhaps it has now, but it does not yet know the surprises that await it.

The Brewed Satire

Disclaimer: Exaggerated for satirical effect.


Get every Brewed Satire free in your inbox → Subscribe on Substack